Computing Resources

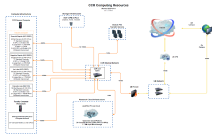

The Center for Computational Research (CCR) provides the research computing infrastructure that enables UB's faculty, staff, students, and collaborators to advance their work. A state-of-the-art high performance computing (HPC) environment and an on-premise cloud infrastructure are available to researchers and industry partners. CCR staff have expertise in HPC systems, advanced networking, parallel programming, AI/ML, and software development to help facilitate faculty-led research. The sections below describe CCR's research computing infrastructure.

CCR's large production clusters currently provide more than 100 Tflops of peak compute capacity

High Performance Computing

The Center for Computational Research supports an extensive research computing facility that includes high performance computing (HPC) environments and an on-premise cloud infrastructure. CCR's primary HPC environment is a Linux cluster, accessible to all UB researchers, with more than 20,000 processor cores and high-performance InfiniBand networks. A subset of its nodes contains NVIDIA GH200, H100, A100, and V100 graphics processing units (GPUs). Industrial partners have access to a separate set of resources that includes more than 5,500 processor cores and InfiniBand networks. These resources also include experimental high-bandwidth memory nodes, and a subset of the nodes contains NVIDIA A100, L40S, and H100 (HGX) GPUs. CCR also supports a private faculty cluster for research groups that need more immediate access to compute nodes than the general shared cluster may provide. Because this infrastructure mimics the primary cluster and CCR staff manage the nodes, research groups with funding can access dedicated compute resources without needing technical support skills of their own.

The Center maintains a 4.6PB Vast Data storage system accessible throughout the HPC environment. In addition, CCR supports an on-premise research cloud that gives researchers an alternative test environment for projects not suited to HPC. A leading academic supercomputing facility, CCR has more than 3 PFlop/s of peak compute capacity.

Cloud Computing

UB CCR's research cloud, nicknamed Lake Effect, is a subscription-based Infrastructure as a Service (IaaS) cloud that provides root-level access to virtual servers and storage on demand. This means CCR can provide tech-savvy researchers with hardware outside the HPC environment for testing software and databases, running websites for research projects, conducting proof-of-concept studies for grant funding, and many other uses that benefit their research. The CCR cloud is compatible with the Amazon Web Services (AWS) EC2 service, so users can move between the two services. More details about the Lake Effect cloud are available in the cloud documentation.

Remote Visualization

CCR offers dedicated compute nodes that host remote visualization capabilities for CCR users who need an OpenGL application GUI with access to the CCR cluster resources. These nodes are available through CCR's OnDemand Portal using the viz partition and QOS. Additional information is available in the CCR documentation.

Storage

Although data storage requirements vary among users of the CCR clusters, most agree that they need large amounts of storage and want it available from all CCR resources.

HPC Storage Options

In January 2021, CCR installed a Vast Data, Inc. storage solution that serves as the high-reliability core storage for user home and group project directories. The storage system was upgraded in 2023, bringing the total pool to 4.6PB of usable storage. The storage is designed to tolerate simultaneous failures, helping ensure the 24x7x365 availability of the Center's primary storage. The Vast Data system provides the storage for /home, /projects, and /vscratch (global scratch). Home directories and project directories of academic groups are backed up daily to UBIT's off-site backup facility. Please read the backup policy to ensure you're aware of exclusions and other backup-related details.

All compute nodes have local storage on high-performance solid state drives (SSDs), typically sized at an 8:1 ratio to the node's local memory. A detailed breakdown of compute node scratch configurations is available in our cluster documentation.
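As a rough illustration of the 8:1 figure, the sketch below estimates node-local scratch capacity from installed memory. The function name and the sample memory sizes are hypothetical, and the ratio is only the "typical" value quoted above, so actual node configurations should be checked against the cluster documentation.

```python
def estimated_local_scratch_gb(memory_gb: float, ratio: float = 8.0) -> float:
    """Estimate node-local SSD scratch size from installed memory.

    `ratio` is scratch capacity divided by memory capacity; 8.0 reflects the
    "typically 8:1" figure and is an assumption for any specific node.
    """
    if memory_gb <= 0 or ratio <= 0:
        raise ValueError("memory_gb and ratio must be positive")
    return memory_gb * ratio


if __name__ == "__main__":
    # Hypothetical memory sizes in GB, chosen only for illustration.
    for memory_gb in (192, 512, 1024):
        scratch_gb = estimated_local_scratch_gb(memory_gb)
        print(f"{memory_gb} GB memory -> about {scratch_gb:.0f} GB local scratch")
```

An estimate like this is only a planning aid for deciding whether a job's temporary files are likely to fit on local scratch or should go to global scratch instead.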

For additional details on the enterprise and scratch storage systems, please refer to our documentation.

Lake Effect Cloud Storage Options

Users of the on-premise Lake Effect research cloud have access to 1.7PB of Ceph block storage. This storage is accessible only through the research cloud environment and is not backed up. Cloud subscriptions come with a dedicated allocation of storage, and research groups can purchase additional storage if needed. For more details on the research cloud, please refer to the cloud documentation.

Networking

CCR maintains several enterprise-level networks to handle both the high speeds required in HPC and the large datasets often generated by HPC users.

CCR Core Ethernet Network

Core networking for the Center was last updated in 2023. The Center's internal Ethernet network infrastructure is centered on an Arista 7508 100GigE core switch. It is an enterprise-class switch with redundant supervisor engines and four 36-port 100GbE QSFP100 wire-speed line cards.

The Vast storage system is directly connected to this core Arista switch with twelve 100 Gigabit uplinks. All compute nodes are connected at 10GigE or 40GigE to in-rack switches that uplink to the Arista core via 100Gb or 200Gb connections.
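The port and uplink counts above imply some nominal aggregate figures, worked through in the short sketch below. The constants come from the text, but the helper names are illustrative, and the results are line-rate upper bounds rather than measured throughput.

```python
# Figures taken from the description of the core switch and storage uplinks.
LINE_CARDS = 4
PORTS_PER_CARD = 36
PORT_SPEED_GBPS = 100   # gigabits per second per port
STORAGE_UPLINKS = 12


def aggregate_gbps(links: int, speed_gbps: float) -> float:
    """Nominal aggregate line rate, in gigabits per second."""
    return links * speed_gbps


def gbps_to_gigabytes_per_s(gbps: float) -> float:
    """Convert gigabits per second to gigabytes per second (8 bits per byte)."""
    return gbps / 8


if __name__ == "__main__":
    total_ports = LINE_CARDS * PORTS_PER_CARD
    core_gbps = aggregate_gbps(total_ports, PORT_SPEED_GBPS)
    storage_gbps = aggregate_gbps(STORAGE_UPLINKS, PORT_SPEED_GBPS)
    print(f"Core switch: {total_ports} ports, {core_gbps / 1000:.1f} Tb/s nominal")
    print(f"Storage uplinks: {storage_gbps / 1000:.1f} Tb/s nominal, "
          f"about {gbps_to_gigabytes_per_s(storage_gbps):.0f} GB/s")
```

Network link speeds are quoted in bits per second, so dividing by eight gives the corresponding ceiling in bytes per second. Protocol overhead and storage performance will reduce what applications actually observe.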

CCR Cluster Interconnects

The Center's clusters contain several high-performance, low-latency InfiniBand networks that interconnect some of the nodes within the clusters. See the academic cluster hardware documentation for more details and for information on how to run on these particular networks.

External Networking to UB and Internet2

High-speed network connectivity is provided to the university’s networks and beyond. The University at Buffalo provides fiber-optic backbone networking between its three campuses. CCR’s data center has a 100Gb connection to UB’s 100Gb core, with internal racks connected at 100G. Off-campus connections are protected by UB's firewall and require connection to the university's VPN service. CCR offers a Globus data transfer service for transferring files to/from CCR, as well as to UBBox and Microsoft OneDrive. Globus provides secure and fast data transfer to/from the CCR storage and other endpoints around the world.
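For planning large data movements, a back-of-the-envelope estimate of transfer time can be useful. The sketch below is not part of Globus or any CCR tool. The function name and the `efficiency` parameter are assumptions for illustration, and real transfer rates depend on both endpoints, the path between them, and the file mix.

```python
def transfer_time_seconds(size_bytes: float, link_gbps: float,
                          efficiency: float = 1.0) -> float:
    """Estimate the time to move `size_bytes` over a link of `link_gbps`.

    `efficiency` is the assumed fraction of the nominal line rate actually
    achieved (0 < efficiency <= 1); 1.0 gives the theoretical best case.
    """
    if size_bytes < 0 or link_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("invalid size, link speed, or efficiency")
    bits_to_send = size_bytes * 8
    bits_per_second = link_gbps * 1e9 * efficiency
    return bits_to_send / bits_per_second


if __name__ == "__main__":
    one_terabyte = 10**12  # decimal terabyte, in bytes
    for efficiency in (1.0, 0.5):  # 0.5 is an arbitrary illustrative value
        seconds = transfer_time_seconds(one_terabyte, 100, efficiency)
        print(f"1 TB over 100 Gb/s at {efficiency:.0%} efficiency: "
              f"{seconds:.0f} s")
```

The best-case number is a lower bound on transfer time. A slower link anywhere along the path, such as a remote site's connection, becomes the limiting value to pass as `link_gbps`.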

The University at Buffalo is an Internet2 member. Through New York State's advanced research network (NYSERNet), CCR and UB have access to all major high-speed communication networks commonly referred to as the Next Generation Internet. NYSERNet leverages commercial connectivity for its member institutions, provides dedicated access to dark fiber, and offers a host of other services.

From in-state resources like Empire AI to national cyberinfrastructure assets provided by the National Science Foundation, UB researchers have an array of additional computational resources at their disposal.