Research Focus Areas

Research at the Center for Translational AI and Digital Health focuses on AI‑driven sensing, decision support, and digital health platforms that can be deployed at the point of care. Our teams work across wearables, ambient sensing, mobile health, and clinical decision systems to support earlier detection, more personalized interventions, and more efficient delivery of care.

Through close collaboration with UBMD, Roswell Park, and regional health partners, we design studies that bring these technologies into clinics and communities, generating evidence that can inform real‑world adoption.

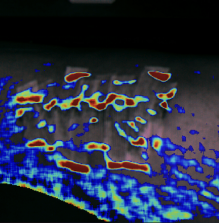

WaveSight

WaveSight OPT puts reliable clinical diagnostics in everyone's hands for more effective management of chronic wounds. Our lightweight, inexpensive device, based on multispectral imaging, provides perfusion maps, bacterial maps, and wound growth trends from a simple 2-minute scan that can be performed daily. With immediate results and AI-powered interpretation, abnormalities may be detected sooner and communicated more clearly to patients and their healthcare providers. We aim to strengthen patients' ability to self-manage their conditions and to improve the standard of care in community health settings where resources are already constrained. Our technology is patent pending and is undergoing clinical studies at Buffalo-based health institutions as part of an NIH/NIBIB R01 grant on novel mobile perfusion technologies.

AudioSight

AudioSight is a smartphone-based hearing screening platform that brings objective hearing assessment into the home. Unlike traditional tests that require clinic visits and active patient participation, AudioSight uses an affordable plug-in accessory and AI to measure auditory function in just minutes, with no patient response needed. This makes screening accessible to older adults and individuals with cognitive decline who are often missed by conventional methods. With one in three adults over 65 experiencing hearing loss, a leading risk factor for dementia, depression, and falls, AudioSight aims to enable earlier detection and timely intervention at scale.

SATE

SATE (Speech Annotation and Transcription Enhancer) is a project that makes children’s language assessment more accessible, efficient, and trustworthy. It helps clinicians and researchers examine speech samples in a faster and more organized way, reducing the heavy manual work that has traditionally limited this kind of evaluation. By combining automated support with expert review, SATE keeps professionals in control while improving speed and consistency. The project is designed to better support children who may have language development difficulties, enabling earlier identification, more informed intervention planning, and stronger support for their learning, communication, and long-term development.

mRehab

mRehab is a personalized stroke rehabilitation system designed to help survivors regain upper-limb function from home. It combines a mobile app with low-cost, 3D-printed tools that mimic everyday objects like mugs and bowls. Using built-in smartphone sensors, mRehab tracks movement patterns in real time and provides immediate feedback on repetitions, speed, and accuracy. Patients can set personalized goals, choose from adaptive exercises across movement, rotation, and functional tasks, and monitor their progress over time. A voice-powered assistant enables hands-free control and smart coaching during sessions. Backed by over seven years of academic research, 10+ publications, and more than $1.2M in NIH and NIDILRR funding, mRehab offers an affordable, engaging alternative to traditional rehabilitation.